I was in the Anthropic camp. Fully in. I thought they were the better company. More thoughtful, more principled. When people around me used ChatGPT, I'd quietly note that I use Claude (as if that said something about my character), and there was a little moral weight behind that choice I didn't fully admit to myself. Anthropic cares about safety. OpenAI moves fast and breaks things. Simple story. Comfortable story.

Fracture

Then Anthropic refused to let the Pentagon use Claude without restrictions on autonomous weapons and mass surveillance. The Department of War labeled them a national security risk. OpenAI signed a deal the same day. My timeline split cleanly in half: "This is why I use Claude" on one side, "Anthropic is naive, safety is just marketing" on the other.

I watched the takes pile up and recognized my own reflexes in them. Then I read Ben Thompson's piece, "Anthropic and Alignment," and it asked a question I hadn't thought to ask: who gave Dario the authority? If AI is as powerful as he himself keeps arguing it is, then Anthropic is building a power base that rivals the state. Why should an SF exec hold veto power over what a democratically elected government does with its military?

I sat with that for a while. I care about safety. I don't want autonomous weapons making kill decisions. But since when does a private executive get to override the state on those calls? That's a massive amount of power for one person to hold, and I'd never questioned it because I agreed with his position. The agreement had made the power grab invisible to me.

Schism

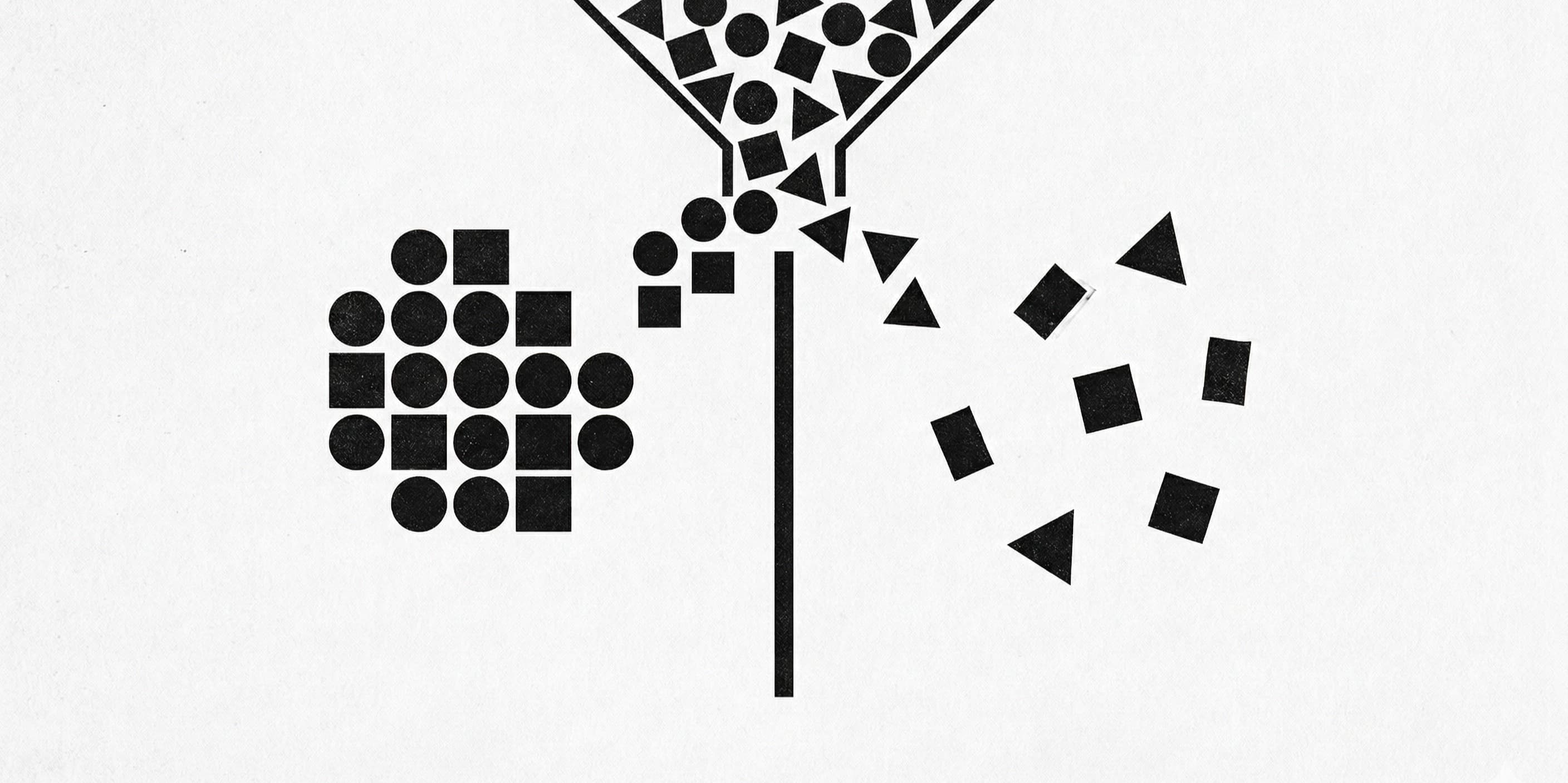

This is how belief systems form. Not with scripture. With a split. One group draws a line, another rejects it, and suddenly you have two tribes that define themselves mostly by not being each other. I've seen people say they use Anthropic because they care about the future of the world. I've seen people equate OpenAI with recklessness. Neither claim holds up to five minutes of scrutiny, but both feel true to the people saying them, and that's the point.

Identity doesn't need to be accurate. It just needs to feel earned. "I chose the harder, more ethical path" is one of the oldest stories humans tell themselves, and the AI tool you pay $20 a month for has become the latest vessel for it. This is how religions start. This is how political identities calcify. One split, two teams, and then the jersey matters more than the game.

I still use Claude. Probably will for a while. But I've stopped carrying it like a badge. The moment your tool preference starts whispering that you're one of the good ones, you should probably audit that thought the same way you'd audit any other unchecked assumption.